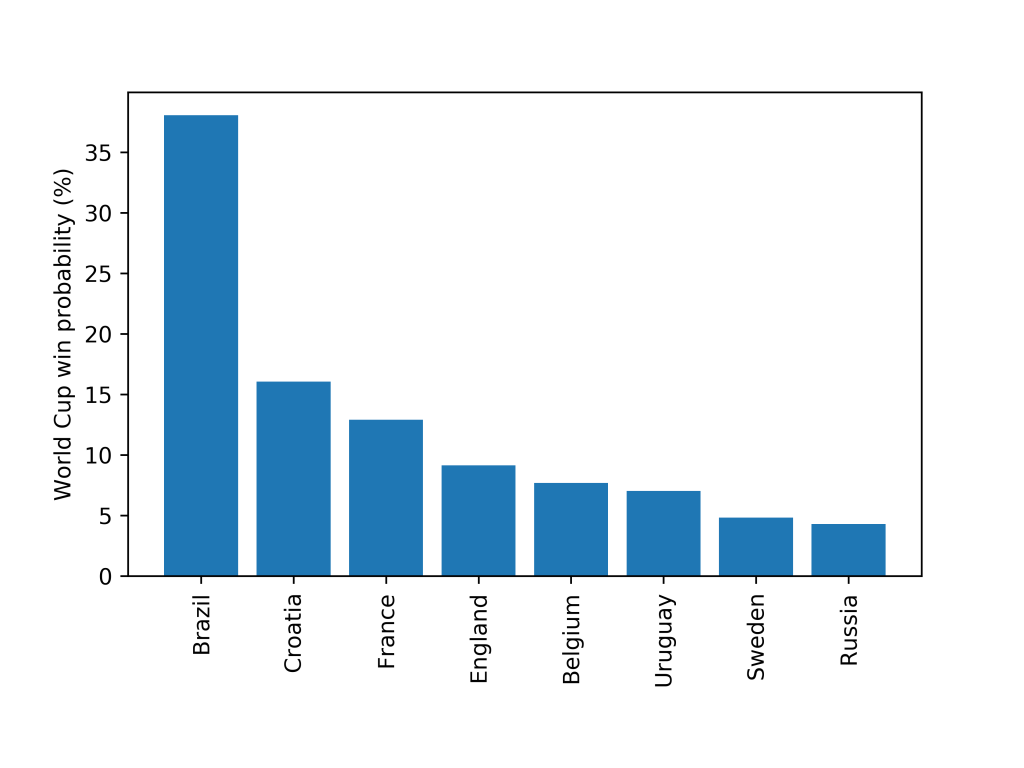

My model now gives Brazil a better than 1-in-3 chance of winning the World Cup.

My World Cup prediction model is described here and my validation methodology here.

The biggest shock of the Round of 16 was the elimination of Spain by Russia. The gulf in quality between the teams (Spain’s Elo rating is 2010, compared to Russia’s 1710) implies it should have been an “easy” win for Spain. However, the Russian home advantage clearly had an impact. Had Spain and Russia played at a neutral venue, my model would have given Spain a 74% chance of a win. With the Russian home advantage, the model drops Spain’s chance to 65% (still a clear favourite though!). Before the tournament started, my model gave Spain an 11% chance of winning it (but it didn’t account for the fact that they fired their manager 2 days before the tournament started).
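To get a feel for how a rating gap and a home bonus move the numbers, here is a minimal sketch of the textbook Elo expected-score formula. It is not the model described in this post: the function name `elo_expected_score`, the parameter `home_advantage_b`, and the 100-point home bonus are all assumptions made for illustration. Only the two ratings come from the text above.

```python
def elo_expected_score(rating_a, rating_b, home_advantage_b=0.0):
    """Textbook Elo expected score for team A (a win counts 1, a draw 0.5).

    home_advantage_b is a hypothetical rating bonus added to team B
    when B plays at home; it is not a parameter taken from the post.
    """
    diff = rating_a - (rating_b + home_advantage_b)
    return 1.0 / (1.0 + 10 ** (-diff / 400.0))


# Ratings quoted in the post: Spain 2010, Russia 1710.
neutral = elo_expected_score(2010, 1710)
# Assumed 100-point bonus for the host; an illustrative value only.
with_home = elo_expected_score(2010, 1710, home_advantage_b=100)

print(f"neutral venue:  {neutral:.3f}")    # about 0.849
print(f"Russia at home: {with_home:.3f}")  # about 0.760
```

Note that an Elo expected score counts a draw as half a win, so these figures are not directly comparable to the 74% and 65% win probabilities quoted above. The point is only that a home bonus shrinks the favourite's edge in the same direction.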

The model now gives Brazil a 37% chance of winning the World Cup and, for the first time, makes Croatia the 2nd favourite with >15%. England are up to about 10% and, interestingly, are now above Belgium. This is because Belgium reach the final in fewer of the simulations (12.5%) than England do (28%). The model gives England a 67% chance of getting to their first semifinal since 1990!
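The effect of the draw on these numbers can be illustrated with a toy Monte Carlo simulation. This is only a sketch under loud assumptions: the four teams A to D and their ratings are made up, the helper names `win_probability` and `simulate_bracket` are hypothetical, and each knockout match is decided by treating the Elo expected score as a win probability (no draws, extra time or penalties). It is not the simulation behind the figures above.

```python
import random


def win_probability(rating_a, rating_b):
    """Elo expected score for A, used here as a knockout win probability (assumption)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def simulate_bracket(ratings, bracket, n_runs=20000, seed=0):
    """Simulate a single-elimination bracket; adjacent teams in `bracket` meet first.

    Returns two dicts: the share of runs in which each team reached the
    final, and the share of runs in which each team won the title.
    """
    rng = random.Random(seed)
    finals = {team: 0 for team in bracket}
    titles = {team: 0 for team in bracket}
    for _ in range(n_runs):
        teams = list(bracket)
        while len(teams) > 1:
            if len(teams) == 2:  # the two remaining teams are the finalists
                for team in teams:
                    finals[team] += 1
            winners = []
            for i in range(0, len(teams), 2):
                a, b = teams[i], teams[i + 1]
                p_a = win_probability(ratings[a], ratings[b])
                winners.append(a if rng.random() < p_a else b)
            teams = winners
        titles[teams[0]] += 1
    reach_final = {t: finals[t] / n_runs for t in bracket}
    win_title = {t: titles[t] / n_runs for t in bracket}
    return reach_final, win_title


# Made-up ratings: B is stronger than C but shares a semifinal with A.
ratings = {"A": 2150, "B": 2000, "C": 1950, "D": 1800}
reach_final, win_title = simulate_bracket(ratings, ["A", "B", "C", "D"])
for team in ratings:
    print(team, f"final: {reach_final[team]:.3f}", f"title: {win_title[team]:.3f}")
```

With these invented ratings, C is rated below B but reaches the final far more often (analytically about 70% against 30%), simply because B has to get past A first. That is the same mechanism that can push a lower-rated team above a higher-rated one in the title odds.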

As I’ve said in my previous posts, model validation is very important. Is there any reason you should trust this model at all? The updated Brier score (which I describe here) for the model is 0.565 (lower is better). The bookies’ Brier score is down to 0.550, so the model is doing slightly worse than the bookies (which is not surprising!) but is still pretty good, all things considered. (I am separately also predicting each of the results of the tournament myself, and the Brier score for my own predictions is 0.626, much worse!)
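For readers who have not met the Brier score, here is a minimal sketch of one common version: the multi-category form, which sums the squared errors over the three possible results of a match and averages over matches. I am assuming this form because the scores quoted above are on that scale. The function name `brier_score`, the outcome labels and the example forecasts are illustrative and not real tournament data.

```python
def brier_score(forecasts, outcomes):
    """Mean multi-category Brier score (0 is perfect, 2 is the worst possible).

    forecasts: list of dicts mapping each outcome label to a probability.
    outcomes:  list of the labels that actually occurred, in the same order.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    total = 0.0
    for probs, actual in zip(forecasts, outcomes):
        # Squared error against a 1 for the observed result and 0 for the rest.
        total += sum(
            (p - (1.0 if label == actual else 0.0)) ** 2
            for label, p in probs.items()
        )
    return total / len(forecasts)


# Illustrative forecasts for two hypothetical matches, not real data.
forecasts = [
    {"home": 0.6, "draw": 0.25, "away": 0.15},
    {"home": 0.3, "draw": 0.3, "away": 0.4},
]
outcomes = ["home", "draw"]
print(f"{brier_score(forecasts, outcomes):.3f}")

# A forecaster who always says 1/3 for each result scores 2/3 per match.
uniform = [{"home": 1 / 3, "draw": 1 / 3, "away": 1 / 3}]
print(f"{brier_score(uniform, ['away']):.3f}")
```

Under this definition, a forecaster with no information who always puts 1/3 on each result scores about 0.667, which gives a rough baseline for reading the numbers above.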